We’ve all seen the projections about how many devices will soon be connected to the internet of things (IoT), says Gowri Chindalore, head of Strategy, Edge Processing, NXP Semiconductors. IDC, for example, predicted that the figure would exceed 41 billion by 2025. Much has been written about the opportunities this will unlock to make our homes, work, play and travel more efficient and sustainable.

But the explosion of data that underpins these advances is causing headaches for those creating products, services and supporting infrastructure. Many early IoT devices relied on the cloud to process the data they collected. This model has been driven in part by the effectively limitless compute capacity in the cloud, coupled with the constrained onboard processing capabilities of many IoT devices.

The limitations of offloading to the cloud

Sending data to and from the cloud has its drawbacks, though. Firstly, transmitting data uses energy and bandwidth, and more data means consuming more of these costly and finite resources. Secondly, sending data to the cloud introduces latency, which limits the effectiveness of applications that need to respond quickly.

Thirdly, offloading information introduces privacy and security risks. Data collected by smart home devices, for example, will reveal a lot about when you’re at home and when you’re out. If this information is sent to the cloud, can you be sure it’s done securely? Where is it stored, and in what form? Who has access to it?

Introducing the intelligent edge

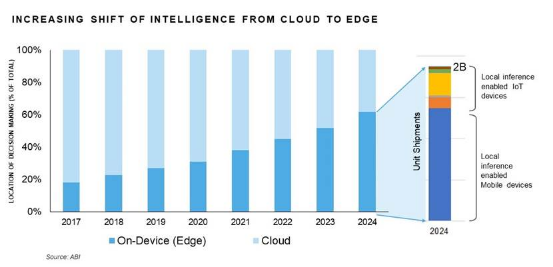

As more devices collect more (and more sensitive) data to be processed, the need to address these challenges is becoming increasingly pressing. This is one of the big drivers behind the rise of the ‘intelligent edge’.

In this model, rather than sending all data to the cloud, key processing and decision-making are done close to the connected device, at the local network’s ‘edge’. This reduces the aforementioned latency, energy consumption and bandwidth use, while enabling users to keep private data within the confines of their own infrastructure.

At the heart of the intelligent edge is machine learning. In this context, we’re currently talking primarily about inference: the edge device uses a pre-trained machine learning model to make decisions based on new data collected by local sensors.

Figure 1: Researchers at ABI predict that shipments of devices capable of onboard AI inference will reach two billion by 2024. (Source: ABI; image courtesy of NXP Semiconductors)
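To make the inference step more concrete, here is a minimal Python sketch, assuming a tiny logistic-regression classifier stands in for whatever pre-trained model a real edge device would run. The `PretrainedModel` class, its weights and the `infer_on_device` helper are hypothetical and not part of any NXP toolkit; the point is only that the decision is made locally, so a compact event, rather than the raw sensor data, is all that would need to leave the device.

```python
import math
from dataclasses import dataclass
from typing import Optional, Sequence


@dataclass(frozen=True)
class PretrainedModel:
    """Hypothetical tiny logistic-regression classifier.

    The weights are assumed to have been trained elsewhere (e.g. offline or
    in the cloud) and shipped to the device; only inference happens here.
    """
    weights: tuple
    bias: float

    def predict_proba(self, features: Sequence[float]) -> float:
        if len(features) != len(self.weights):
            raise ValueError("feature vector length does not match the model")
        # Linear score followed by the logistic (sigmoid) function.
        z = self.bias + sum(w * x for w, x in zip(self.weights, features))
        return 1.0 / (1.0 + math.exp(-z))


def infer_on_device(model: PretrainedModel,
                    features: Sequence[float],
                    threshold: float = 0.5) -> Optional[dict]:
    """Run inference locally and return a small event, or None.

    The raw sensor features stay inside this function; only the decision
    (and its confidence) would be transmitted upstream.
    """
    p = model.predict_proba(features)
    if p >= threshold:
        return {"event": "anomaly", "confidence": round(p, 3)}
    return None
```

With the illustrative weights `(2.0, -1.0)` and bias `-0.5`, the features `[1.0, 0.2]` produce an event with confidence 0.786, while `[0.0, 1.0]` returns `None`, so nothing would be sent.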

Driving the shift to AI at the edge

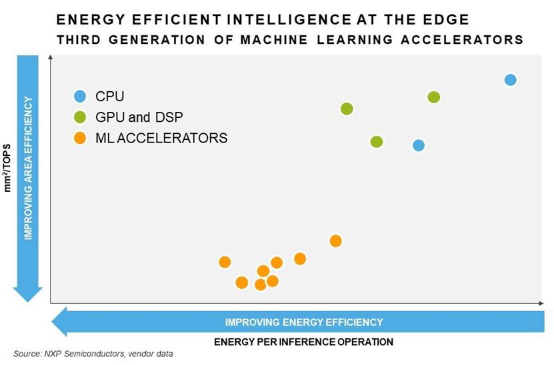

The significant growth of inference in such resource-constrained environments is made possible by improvements to inference processing, and specifically the technologies used to accelerate it.

The first generation of machine learning accelerators (if they were accelerators at all) was largely software-based, with a general-purpose CPU executing the model as ordinary instructions. The second generation introduced dedicated hardware, such as GPUs and DSPs. Today, we have the third generation, which uses features such as hardware-based pruning and compression. The more of this work that is done in hardware, the more energy-efficient inference becomes, because fewer software instructions and CPU cycles are needed.

Figure 2: Energy efficiency improvements are seen with machine learning accelerators. (Source: NXP Semiconductors)
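The idea behind pruning can be illustrated in software, even though the third-generation accelerators described here do it in hardware. The sketch below assumes simple magnitude pruning (zeroing the smallest weights) as a stand-in for whatever scheme a given accelerator actually uses; the function names are hypothetical. Counting the multiply-accumulate (MAC) operations that get skipped gives a rough sense of why pruned models need less compute, although MAC count is only a proxy for energy, not a measurement of it.

```python
from typing import List, Sequence, Tuple


def magnitude_prune(weights: Sequence[float], sparsity: float) -> List[float]:
    """Hypothetical helper: zero out the smallest-magnitude fraction of weights.

    `sparsity` is the fraction of weights to remove, e.g. 0.5 for half.
    """
    if not 0.0 <= sparsity <= 1.0:
        raise ValueError("sparsity must be in [0, 1]")
    n_prune = int(len(weights) * sparsity)
    # Indices ordered by absolute weight, smallest first.
    order = sorted(range(len(weights)), key=lambda i: abs(weights[i]))
    pruned = list(weights)
    for i in order[:n_prune]:
        pruned[i] = 0.0
    return pruned


def sparse_dot(weights: Sequence[float],
               inputs: Sequence[float]) -> Tuple[float, int]:
    """Dot product that skips zero weights.

    Returns (result, number of MACs actually performed), mimicking in
    software what sparsity-aware hardware avoids computing.
    """
    total = 0.0
    macs = 0
    for w, x in zip(weights, inputs):
        if w != 0.0:
            total += w * x
            macs += 1
    return total, macs
```

For example, pruning the six weights `[0.9, -0.05, 0.4, 0.01, -0.7, 0.02]` at 50% sparsity zeroes the three smallest, halving the MACs from six to three.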

What today’s intelligent edge can enable

As humans, much of our communication is delivered using more than just words: our tone, facial expressions and hand gestures all contribute to the way we instinctively communicate and understand one another. Using edge-based inference, today’s designers can enable their products to pick up on these signals, thereby crafting increasingly natural-feeling interactions. Techniques include face, object and gesture recognition, voice recognition, tonal analysis and natural language processing.

Elsewhere, intelligent edge devices can enhance safety. For example, smart home edge devices could be trained to recognise danger signals, such as an alarm going off, a person falling, glass breaking or a tap left dripping or running. On sensing a problem, the system could then alert the owner, enabling them to react accordingly.

What comes next?

The next few years will likely see many new IoT products and services coming to market that leverage this increasingly capable intelligent edge.

As noted above, we’re currently at the third generation of AI-acceleration capabilities. Future generations could include neuromorphic or in-memory computing, spiking neural networks or, eventually, quantum AI. These developments will help accelerate another emerging trend: training machine learning models at the edge, not just running inference there.

It promises to be an exciting time for designers, engineers, businesses and consumers alike, with our technology becoming more helpful, more secure and more sustainable.

The author is Gowri Chindalore, head of Strategy, Edge Processing, NXP Semiconductors.

Comment on this article below or via Twitter: @IoTNow_OR @jcIoTnow