As industry players look to provide the next generation of IoT connectivity, two different standards have emerged under release 13 of 3GPP – CAT-M1 and NB-IoT.

NB-IoT vs Cat-M2

Subsequently, the market became fragmented and it is fair to say that confusion abounds. Indeed, the efforts being made to further each standard, as well as the time and money at stake, are pushing chipmakers, hardware providers and service networks to examine each option carefully. First, let’s look at some of the objective differences in the chart below.

| Parameter | CAT-M1 (CAT-M) | NB-IoT |
|---|---|---|
| Bandwidth | 1.4MHz | 200kHz |
| Modes of Operation | In-band | In-band, guard-band, standalone (GSM bands) |
| Duplex Mode | HD-FDD / FDD / TDD | HD-FDD (TDD under discussion) |
| Peak Data Rate | 375kbps (HD-FDD), 1Mbps (FDD) | ~50kbps for HD-FDD (not decided yet in 3GPP) |
| UL Transmit Power | 23dBm, 20dBm | 23dBm, lower power under discussion |
| VoLTE support | Will be supported | Not supported |
| Mobility support | Full mobility support | No connected mobility (only idle mode reselection) |
| TTM | 6-9 month advantage (estimated) | Standard is not finalised yet; some aspects postponed to R14 |

As we can see, CAT-M1 has the advantage in peak data rate as well as time-to-market, while NB-IoT has greater flexibility in the spectrum that can be utilised and in its modes of operation.

Of course, the key parameters that most interest providers are performance, cost and power. The current market perception is that NB-IoT offers better coverage, lower power consumption and significantly lower cost. However, a closer, more critical look at the data suggests that this is not the technical reality. Let’s delve into these three KPIs from a technical standpoint, says Itay Lusky, senior director of Strategic Product Marketing at Altair Semiconductor.

Performance of Cat-M1, Cat-M, NB-IoT, Cat-M2

Maximum coupling loss (MCL) is defined as the maximal total channel loss between User Equipment (UE) and eNodeB (eNB) antenna ports at which the data service can still be delivered. Practically, it includes antenna gains, path loss, shadowing and any other impairments. The higher the MCL, the more robust the link is.

According to 3GPP, the MCL for CAT-M1 is 155.7 dB while for NB-IoT it is 164 dB, an extraordinary difference of more than 8 dB. On the surface, this would indicate a significant advantage for NB-IoT’s performance. Yet this comes as a surprise because, according to Shannon's capacity theorem, in the low-SNR approximation capacity is independent of bandwidth if the noise is white.

As a result, we would have expected:

- Similar coverage in uplink assuming the same total transmit power

- x6 (~8dB) better coverage for CAT-M1 in downlink as incoming eNB signal energy is x6 larger due to the larger bandwidth used
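The reasoning behind these two expectations can be written out as a short derivation. It is a sketch under stated assumptions, not taken from the 3GPP documents: $C$ is capacity, $B$ bandwidth, $P$ received signal power and $N_0$ the white-noise power spectral density. The factor of 6 is read here as six 180 kHz resource blocks for CAT-M1 against one for NB-IoT, which is an interpretation of the "x6" figure above.

$$
C = B \log_2\!\left(1 + \frac{P}{N_0 B}\right) \approx \frac{P}{N_0 \ln 2} \quad \text{when } \frac{P}{N_0 B} \ll 1
$$

In the low-SNR regime $B$ drops out, so capacity depends only on the received power $P$. In uplink the UE transmit power is the same for both standards, hence similar coverage. In downlink, if the eNB transmits with the same power density across the band, the wider CAT-M1 signal collects six times the power:

$$
10 \log_{10}(6) \approx 7.8\ \text{dB}
$$

which is the "~8dB" figure quoted above.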

Indeed, a closer look at the reference scenario definitions reveals that the MCL for the two standards was defined using different transmit power, Noise Figure and target throughput assumptions, making it an uneven comparison. This can be seen in the table below.

| Parameter | CAT-M1 Downlink | CAT-M1 Uplink | NB-IoT Downlink | NB-IoT Uplink |
|---|---|---|---|---|
| References | 3GPP 36.888, RP-150492 | 3GPP 36.888, RP-150492 | 3GPP 45.820 7A | 3GPP 45.820 7A |
| Tx Power | 46dBm/9MHz | 23dBm | 43dBm/180kHz | 23dBm |
| Noise Figure | 9dB | 5dB | 5dB | 3dB |

If instead we use the same assumptions (equal Tx power, Noise Figure and target throughput), we will see that the above expectations hold: in UL both standards have the same coverage, and in DL CAT-M1 has ~8dB better coverage than NB-IoT.
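To get a feel for how far apart the reference assumptions are, the Tx power and Noise Figure entries from the table can be put on a common footing. The sketch below is only illustrative arithmetic on those table values: the helper name `per_180khz_power_dbm` is hypothetical, target throughput is not modelled, and it does not reproduce the 3GPP link budgets.

```python
import math

def per_180khz_power_dbm(total_dbm: float, total_bw_hz: float, block_bw_hz: float = 180e3) -> float:
    """Share of a total transmit power (dBm) that falls within one 180 kHz block."""
    return total_dbm - 10 * math.log10(total_bw_hz / block_bw_hz)

# Downlink Tx power assumptions taken from the table
catm1_dl_per_block = per_180khz_power_dbm(46.0, 9e6)  # 46 dBm spread over 9 MHz
nbiot_dl_per_block = 43.0                              # 43 dBm within a single 180 kHz carrier

# Gaps between the two sets of reference assumptions, in dB
tx_density_gap_db = nbiot_dl_per_block - catm1_dl_per_block
nf_gap_dl_db = 9.0 - 5.0  # receiver Noise Figure assumed for downlink
nf_gap_ul_db = 5.0 - 3.0  # receiver Noise Figure assumed for uplink

print(f"CAT-M1 DL power per 180 kHz: {catm1_dl_per_block:.1f} dBm")
print(f"DL Tx power density gap in favour of NB-IoT: {tx_density_gap_db:.1f} dB")
print(f"Noise Figure gap in favour of NB-IoT: DL {nf_gap_dl_db:.0f} dB, UL {nf_gap_ul_db:.0f} dB")
```

Under these inputs, the NB-IoT reference scenario assumes roughly 14 dB more downlink power per 180 kHz and a Noise Figure that is 4 dB (DL) and 2 dB (UL) lower, which is why the headline MCL figures cannot be compared directly.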

In practice, when we also consider the frequency hopping and turbo coding features present in the CAT-M1 standard, CAT-M1’s advantage becomes even more apparent.

Cost

NB-IoT is perceived to have a substantially lower cost structure than CAT-M1, which is crucial in products like smart trackers, sensors and smart meters.

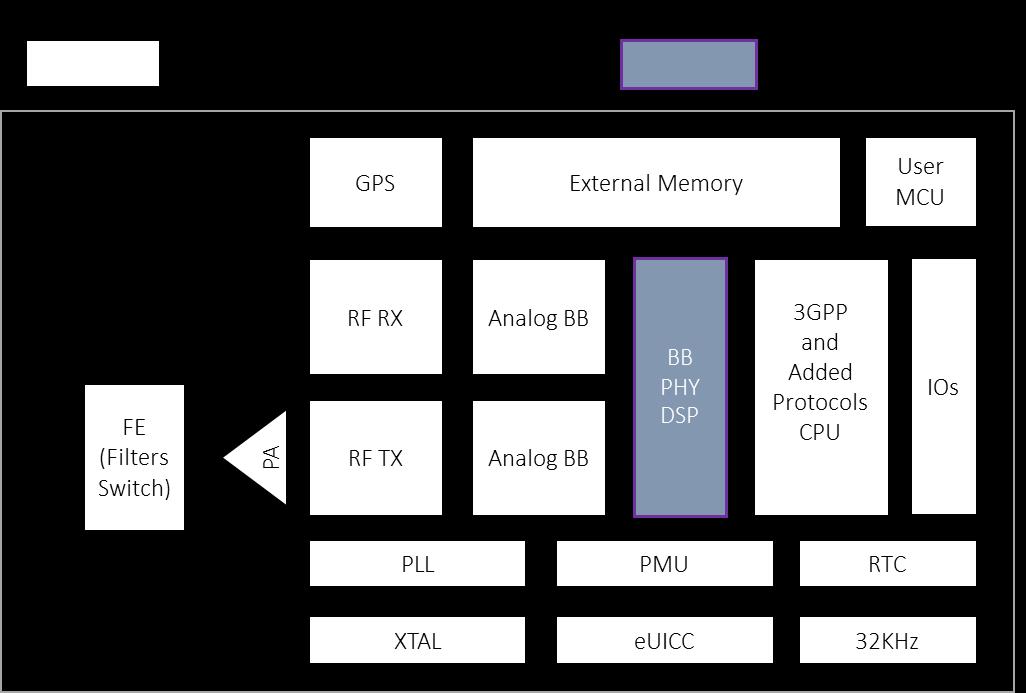

A block diagram of a typical modem will help us evaluate this claim.

The block diagram shows the common building blocks of a typical module design. These include RF blocks (such as filters, switches, PA, transmit and receive chains etc.), transmit and receive analog blocks, baseband (“BB”), a processor handling protocol implementation, memory, other servicing blocks (crystals, Power Management Unit (PMU), eUICC support, Real Time Clock (RTC)) and optional blocks (such as GPS and MCU).

Most of the blocks, marked in white, do not change as a function of the 3GPP standard used.

This holds true assuming there is an apples-to-apples comparison between technologies (i.e. same number of bands, same operator added services, same added capabilities such as integrated GPS, MCU etc.).

The main block that is changed between technologies is the baseband Physical Layer (PHY) in charge of the Digital Signal Processing (DSP) of the modem.

The baseband PHY block size may be substantially reduced by moving from 1.4MHz processing to 200kHz processing. However, given current technology, the difference amounts to a cost delta of ~10 cents, which is ~2% of target module prices for 3GPP R13 technologies. That gap will become even smaller in about 2-3 years as the technology matures and process geometries shrink in line with Moore’s law.

In short, NB-IoT does have a cost advantage over CAT-M1; however, it is much smaller than the current industry perception.

Power

Power consumption in IoT devices consists of both standby and active power consumption.

Standby power consumption is a function of the design and technology used, and essentially should not differ between CAT-M1 and NB-IoT. Active power consumption does differ between the two technologies. It is essentially the product of transmitted power density and the length of transmission.

Starting with DL active power consumption, CAT-M1 supports substantially higher throughput than NB-IoT (both x6 in bandwidth and higher-order modulation). As a result, the time the UE needs to receive a given amount of data is substantially shorter, resulting in an estimated 50% lower active power consumption than NB-IoT.
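The link between throughput and active consumption can be illustrated with a few lines of arithmetic. This is a minimal sketch that assumes the same active power on both links. The function name and all numeric inputs are hypothetical, chosen only so that the faster link halves the airtime; they are not measured CAT-M1 or NB-IoT figures.

```python
def active_energy_mj(active_power_mw: float, payload_bits: int, throughput_bps: float) -> float:
    """Energy spent while the radio is active: power multiplied by airtime."""
    airtime_s = payload_bits / throughput_bps
    return active_power_mw * airtime_s  # mW x s = mJ

# Hypothetical inputs for illustration only (not measured values)
ACTIVE_POWER_MW = 500.0
PAYLOAD_BITS = 8_000

slow_link_mj = active_energy_mj(ACTIVE_POWER_MW, PAYLOAD_BITS, throughput_bps=50_000)
fast_link_mj = active_energy_mj(ACTIVE_POWER_MW, PAYLOAD_BITS, throughput_bps=100_000)

# Fraction of active energy saved by the faster link
saving = 1 - fast_link_mj / slow_link_mj
print(f"slow link: {slow_link_mj:.1f} mJ, fast link: {fast_link_mj:.1f} mJ, saving: {saving:.0%}")
```

With equal active power, energy per payload scales inversely with throughput, so any reduction in airtime translates directly into a proportional reduction in active consumption.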

For UL, in good channel conditions CAT-M1 has lower active power consumption due to its support for higher-order modulation. In limited channel conditions NB-IoT is superior to CAT-M1 due to its support of single-tone transmission. That gap is likely to be closed in 3GPP R14.

To summarise, CAT-M1 has lower active power consumption in DL, and in UL in good channel conditions. For UL in limited channel conditions, NB-IoT today has better active power numbers.

Conclusion

Both CAT-M1 and NB-IoT are being pursued aggressively to become the de-facto connectivity solution for IoT products. While both standards fare well in different scenarios, it is critical not to take market perceptions at face value but rather compare both solutions evenly, all things being equal, in order to make the right technology decisions.

We analysed three KPIs: coverage, cost and power consumption. While the market perception is that NB-IoT has a clear advantage over CAT-M1 for these KPIs, we conclude that CAT-M1 actually offers advantages for coverage and power, and only a minimal cost disadvantage when compared to NB-IoT.

Future platforms that support both CAT-M1 and NB-IoT may ultimately allow providers to hedge their bets, but until then it is crucial to understand the technical data and consider the real added-value before choosing.

The author of this blog is Itay Lusky, senior director of Strategic Product Marketing at Altair Semiconductor

About the Author:

Itay Lusky is the senior director of Strategic Product Marketing at Altair Semiconductor, a leading provider of single-mode LTE chipsets. Altair’s portfolio covers the complete spectrum of cellular 4G market needs, from supercharged video-centric applications all the way to ultra-low power, low cost IoT and M2M. Altair has shipped millions of LTE chipsets to date, commercially deployed on the world’s most advanced LTE networks.
