In earlier eras of enterprise IT, IT departments mastered a variety of technological approaches to extract value from data. Data warehouses, analytical platforms and many types of databases filled data centres, backed by storage devices where records were preserved on disk for their historical value.

By contrast, says Kelly Herrell, CEO of Hazelcast, data today is being generated and streamed by Internet of Things (IoT) devices at an unprecedented rate. The “Things” in IoT (sensors, mobile apps, connected vehicles and more) are innumerable, and their sheer number alone drives explosive growth in data. Add to that the “network effect”, in which a network’s value grows with the number of connected users, and it’s not hard to see why firms like IDC project the IoT market will reach US$745 billion (€665 billion) next year and surpass the $1 trillion (€0.89 trillion) mark in 2022.

This megatrend is disrupting the data processing paradigm. The historical value of stored data is being superseded by the temporal value of streaming data. In the streaming data paradigm, value is a direct function of immediacy, for two reasons:

- Difference: Just as the water molecules passing any point in a hose are different at every moment, the data streaming through the network is different in each window of time.

- Perishability: The opportunity to act on insights found within streaming data often dissipates shortly after the data is generated.
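The two properties above can be made concrete with a small sketch. The Python below is illustrative only and not taken from Hazelcast or any specific streaming engine: `tumbling_windows` groups timestamped readings into fixed, non-overlapping windows, so each window holds different data, and `insight_value` is a hypothetical exponential decay model that assumes the value of acting on an insight halves every `half_life_s` seconds. Both function names and the decay model are assumptions for illustration.

```python
from collections import defaultdict
from typing import Iterable


def tumbling_windows(
    events: Iterable[tuple[float, float]], width: float
) -> dict[int, list[float]]:
    """Group (timestamp, value) events into fixed, non-overlapping windows.

    Window k covers timestamps in [k * width, (k + 1) * width), so every
    window contains a different slice of the stream ("difference").
    """
    windows: dict[int, list[float]] = defaultdict(list)
    for ts, value in events:
        windows[int(ts // width)].append(value)
    return dict(windows)


def insight_value(age_s: float, half_life_s: float) -> float:
    """Hypothetical model of "perishability": the value of acting on an
    insight halves every half_life_s seconds after the data was generated."""
    return 0.5 ** (age_s / half_life_s)
```

Under this assumed model, an insight acted on after two half-lives retains only a quarter of its original value, which is one way to express why delay erodes the worth of streaming data.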

Together, difference and perishability define the streaming data paradigm. Sudden changes detected in data streams demand immediate action, whether it’s a match in real-time facial recognition or drilling-rig vibration sensors suddenly registering abnormalities that could be disastrous if preventive steps aren’t taken immediately.

IoT and streaming data are accelerating the pace of change in this time-sensitive paradigm, and stream processing itself is changing rapidly.

Two generations, same problems

The first generation of stream processing was based largely on batch processing using complex Hadoop-based architectures. Data was loaded significantly after it was generated, and only then pushed as a stream through the data processing engine. The combination of complexity and delay made this method largely insufficient.

The second generation, still widely used, shrank batch sizes to “micro-batches.” The complexity of implementation did not change, and while smaller batches take less time, there is still a delay in assembling each batch. The second generation can identify difference but doesn’t address perishability: by the time it discovers a change in the stream, that change is already history.
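The latency cost of micro-batching can be estimated with simple arithmetic. The sketch below assumes batches cover fixed half-open intervals [kB, (k+1)B) and are processed as soon as each interval closes; the function names and this timing model are hypothetical and ignore scheduling overhead. An event arriving partway through an interval must wait for the interval to close, whereas an event-at-a-time engine starts work on it immediately.

```python
import math


def microbatch_delay(arrival_s: float, batch_interval_s: float, processing_s: float) -> float:
    """Seconds from an event's arrival until its result is available under
    micro-batching (assumed model: batches close on fixed boundaries
    0, B, 2B, ... and are processed immediately when they close)."""
    # The event belongs to the interval [k*B, (k+1)*B) and waits for it to close.
    batch_close = (math.floor(arrival_s / batch_interval_s) + 1) * batch_interval_s
    return (batch_close - arrival_s) + processing_s


def per_event_delay(processing_s: float) -> float:
    """Event-at-a-time processing: work starts as soon as the event arrives."""
    return processing_s
```

For example, with a 2-second batch interval and 0.1 seconds of processing, an event arriving at 0.5 s yields a result after 1.6 s under micro-batching, versus 0.1 s when processed individually. If arrivals are spread evenly, the average extra wait is half the batch interval.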

Third-generation stream processing

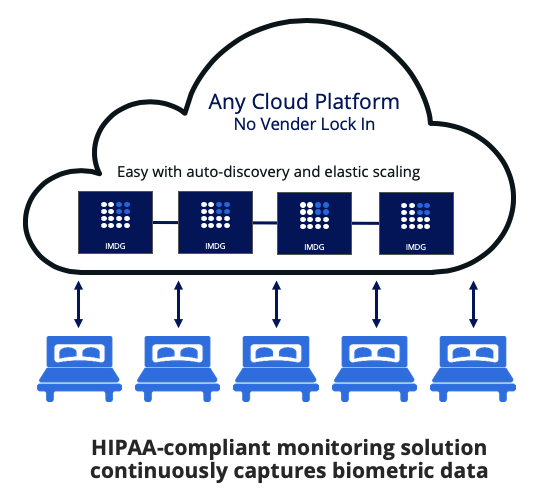

The first two generations highlight the hurdles facing IT organisations: how can stream processing be made easier to implement while processing data at the moment it is generated? The answer: the software must be simpler, must not be batch-oriented, and must be small enough to be placed extremely close to the stream sources.

The first two generations of stream processing require installing and integrating multiple components, which results in too large a footprint for most edge and IoT infrastructures. A lightweight footprint allows the streaming engine to be installed close to, or embedded at, the source of the data. This proximity removes the need for the IoT stream to traverse the network for processing, reducing latency and helping to address the perishability challenge.

The challenge for IT organisations is to ingest and process streaming data sources in real time, refining the data into actionable information immediately. Delays in batch processing diminish the value of streaming data. Third-generation stream processing can overcome the latency inherent in batch processing by working on live, raw data as it arrives, at any scale.

Streaming in practice

A drilling rig is one of the most recognisable symbols of the energy industry. However, a rig’s operating costs are extremely high, and any downtime during drilling can significantly affect the operator’s bottom line. Preventive insights create new opportunities to reduce those losses dramatically.

SigmaStream, which specialises in high-frequency data streams generated in the drilling process, is a good example of stream processing implemented in the field. SigmaStream customer rigs are equipped with a large number of sensors that detect the smallest vibrations during drilling. These sensors can feed 60 to 70 channels of high-frequency data into the stream processing system.

By processing this information in real time, SigmaStream enables operators to act on these data streams immediately to prevent failures and delays. A third-generation streaming engine, coupled with the right tools to process and analyse the data, allows operators to monitor almost imperceptible vibrations through streaming analytics on the rig’s data. By making fine-tuned adjustments, SigmaStream customers have saved millions of dollars and reduced time-on-site by as much as 20%.
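To illustrate what streaming analytics across many sensor channels might look like, the sketch below keeps running statistics for each channel with Welford's online algorithm and flags a sample whose z-score against that channel's history exceeds a threshold. This is not SigmaStream's or Hazelcast's method; `ChannelMonitor`, the threshold of 4 standard deviations and the 30-sample warm-up are hypothetical choices. Because each sample is evaluated as it arrives and the state per channel is constant-size, the approach needs no batching and keeps a small memory footprint.

```python
import math
from dataclasses import dataclass


@dataclass
class RunningStats:
    """Welford's online algorithm: mean and variance updated one sample at a time."""
    n: int = 0
    mean: float = 0.0
    m2: float = 0.0  # running sum of squared deviations from the mean

    def update(self, x: float) -> None:
        self.n += 1
        delta = x - self.mean
        self.mean += delta / self.n
        self.m2 += delta * (x - self.mean)

    def std(self) -> float:
        # Sample standard deviation; undefined below two samples, so report 0.
        return math.sqrt(self.m2 / (self.n - 1)) if self.n > 1 else 0.0


class ChannelMonitor:
    """Hypothetical per-channel detector: flags a sample whose z-score against
    that channel's history exceeds `threshold`, then folds it into the stats."""

    def __init__(self, threshold: float = 4.0, warmup: int = 30) -> None:
        self.threshold = threshold
        self.warmup = warmup  # samples to collect before flagging anything
        self.stats: dict[int, RunningStats] = {}

    def observe(self, channel: int, value: float) -> bool:
        s = self.stats.setdefault(channel, RunningStats())
        anomalous = False
        if s.n >= self.warmup:
            sd = s.std()
            if sd > 0 and abs(value - s.mean) / sd > self.threshold:
                anomalous = True
        s.update(value)
        return anomalous
```

A caller would invoke `observe(channel, value)` for each interleaved sample from the 60 to 70 channels and raise an alert whenever it returns `True`. For simplicity, flagged samples are still folded into the statistics; a production detector might exclude them or use a windowed baseline so that slow drift does not mask new anomalies.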

In today’s digital era, latency is the new downtime. Stream processing is the logical next step for organisations looking to process information faster, act sooner and handle new data at the speed at which it arrives. By bringing stream processing to mainstream applications, organisations can thrive in a world dominated by new breeds of ultra-high-performance applications and deliver information quickly enough to meet rising expectations.

The author is Kelly Herrell, CEO of Hazelcast
